What Is GateRouter? A Guide To Web3 AI Model Routing And LLM Gateway

As AI applications and AI Agents continue to grow rapidly, developers increasingly need to access multiple AI models in order to achieve optimal performance across different task scenarios. However, these models are often provided by different vendors, each with its own interface standards, pricing structures, and capabilities. This fragmentation makes AI infrastructure management more complex.

GateRouter addresses this challenge by introducing a unified API access layer and an intelligent model routing mechanism. Through this system, requests can be automatically directed to the most suitable AI model based on factors such as task complexity, cost, and performance requirements. At the same time, GateRouter enables AI Agents to pay for API usage through automated, cryptocurrency-based payment mechanisms.

What Is GateRouter? Definition And Core Functions

As the core component of Gate for AI, GateRouter is not a new large language model itself. Instead, it functions as an intelligent routing and orchestration layer positioned between client applications and a wide range of global AI model providers. As a unified AI model router and LLM gateway, GateRouter allows developers and AI Agents to interact with multiple large language models through a single API.

Through GateRouter, developers no longer need to integrate APIs from different AI providers separately. Instead, they can access multiple models through a single entry point. This architecture simplifies development workflows while allowing systems to automatically select the most appropriate model for each request based on specific requirements. It also supports cryptocurrency-based payments for API usage, enabling AI Agents to manage and pay for model access autonomously.

The core functions of GateRouter include:

| Function | Description |
|---|---|
| Multi Model Access | Access multiple AI models through a single API |
| Intelligent Model Routing | Automatically select models based on task complexity, cost, and latency |
| Automated Payment | Allows AI Agents to pay API usage fees with cryptocurrency |
| OpenAI API Compatibility | Supports seamless migration of existing AI applications |

In simple terms, GateRouter acts as both a routing layer and a payment layer for AI models, helping developers manage multi-model AI infrastructure more efficiently.

What Is AI Model Routing?

AI model routing refers to the process of dynamically selecting which large language model should handle a particular request.

In traditional architectures, an application usually connects to a single model, such as a specific GPT model. However, different tasks require different levels of model capability. For example, simple text classification tasks, complex reasoning problems, code generation, and multilingual translation all place different demands on an AI system. If every request is handled by the most powerful model, operational costs can increase significantly. On the other hand, using a model that is too simple may reduce the quality of the results.

AI model routing addresses this challenge by automatically selecting the most suitable model for each task. This approach helps achieve a balance between performance and cost efficiency.

How AI Model Routing Works

An AI model routing engine evaluates each incoming request and determines which model should process it. The routing decision is typically based on several factors.

Common evaluation criteria include request complexity (whether the task requires advanced reasoning), each available model's capability for the specific task, response latency, and token pricing (the cost of processing the request).

For example, relatively simple tasks such as text summarization may be routed to a faster and less expensive model. More demanding tasks, such as code generation or multi-step reasoning, may be assigned to a more advanced model with stronger analytical capabilities. This dynamic routing mechanism helps maintain output quality while reducing the overall operating cost of AI systems.
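The routing decision described above can be sketched as a small selection function. This is a minimal illustration, not GateRouter's actual algorithm: the model names, capability scores, prices, and latencies are all invented for the example, and the policy (cheapest model that meets the capability and latency limits) is just one plausible choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str                 # hypothetical label, not a real model ID
    capability: int           # 1 (basic) to 5 (strongest reasoning)
    usd_per_1k_tokens: float  # illustrative price, not a real quote
    latency_ms: int           # illustrative typical latency


# Illustrative catalogue; every value here is invented for the example.
CATALOGUE = [
    ModelProfile("small-fast", capability=2, usd_per_1k_tokens=0.0005, latency_ms=300),
    ModelProfile("mid-general", capability=3, usd_per_1k_tokens=0.003, latency_ms=800),
    ModelProfile("large-reasoning", capability=5, usd_per_1k_tokens=0.03, latency_ms=2500),
]


def route(required_capability: int, max_latency_ms: int, models=CATALOGUE) -> ModelProfile:
    """Pick the cheapest model that meets the capability and latency limits."""
    eligible = [
        m for m in models
        if m.capability >= required_capability and m.latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError("no model satisfies the request constraints")
    return min(eligible, key=lambda m: m.usd_per_1k_tokens)


# A summarization request tolerates a weaker model but wants a fast answer;
# a code generation request needs the strongest model and accepts more latency.
summary_model = route(required_capability=2, max_latency_ms=1000)
codegen_model = route(required_capability=5, max_latency_ms=5000)
print(summary_model.name, codegen_model.name)
```

In practice the hard part is estimating `required_capability` from the request itself; here it is simply passed in by the caller.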

How Do AI Agents Pay For API Requests?

One notable feature of GateRouter is its ability to support automated cryptocurrency payments for API usage. This mechanism allows AI Agents to pay for model requests without human intervention, creating a self-contained economic loop for automated systems.

The payment process typically follows these steps:

- The AI Agent sends a request to the API
- The API responds with an HTTP 402 Payment Required status
- The response includes pricing information for the request
- The agent automatically completes payment using supported tokens such as USDC or USDT
- After payment is confirmed, the API returns the model response

This mechanism enables AI Agents to independently access paid AI services without requiring manual payment steps. It also supports machine-to-machine payment systems, pay-as-you-go usage models without preloaded balances, and automated AI applications that need to operate continuously.
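The request, quote, pay, and retry loop above can be sketched against an in-memory stand-in for a paid endpoint. Nothing here reflects GateRouter's real interface: `FakePaidApi`, its `settle` method, the receipt strings, and the response fields are all hypothetical, and no network call or on-chain payment takes place.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Response:
    status: int
    body: dict


@dataclass
class FakePaidApi:
    """In-memory stand-in for a paid endpoint (hypothetical, not a real API)."""
    price_usdc: float = 0.02
    paid_receipts: set = field(default_factory=set)

    def handle(self, prompt: str, receipt: Optional[str] = None) -> Response:
        if receipt not in self.paid_receipts:
            # No valid proof of payment: answer 402 and quote the price.
            return Response(402, {"price": self.price_usdc, "token": "USDC"})
        # Payment confirmed: return the (fake) model output.
        return Response(200, {"output": f"echo: {prompt}"})

    def settle(self, amount: float, token: str) -> str:
        """Pretend to confirm a payment and hand back a receipt ID."""
        if token != "USDC" or amount < self.price_usdc:
            raise ValueError("payment rejected")
        receipt = f"receipt-{len(self.paid_receipts) + 1}"
        self.paid_receipts.add(receipt)
        return receipt


def call_with_auto_payment(api: FakePaidApi, prompt: str, budget: float) -> dict:
    first = api.handle(prompt)                    # send the initial request
    if first.status != 402:
        return first.body
    quote = first.body                            # read the quoted price
    if quote["price"] > budget:
        raise RuntimeError("quoted price exceeds the agent's budget")
    receipt = api.settle(quote["price"], quote["token"])  # pay automatically
    return api.handle(prompt, receipt).body       # retry with proof of payment


print(call_with_auto_payment(FakePaidApi(), "hello", budget=0.05))
```

The budget check is the one piece an autonomous agent should not skip: without it, any quoted price would be paid automatically.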

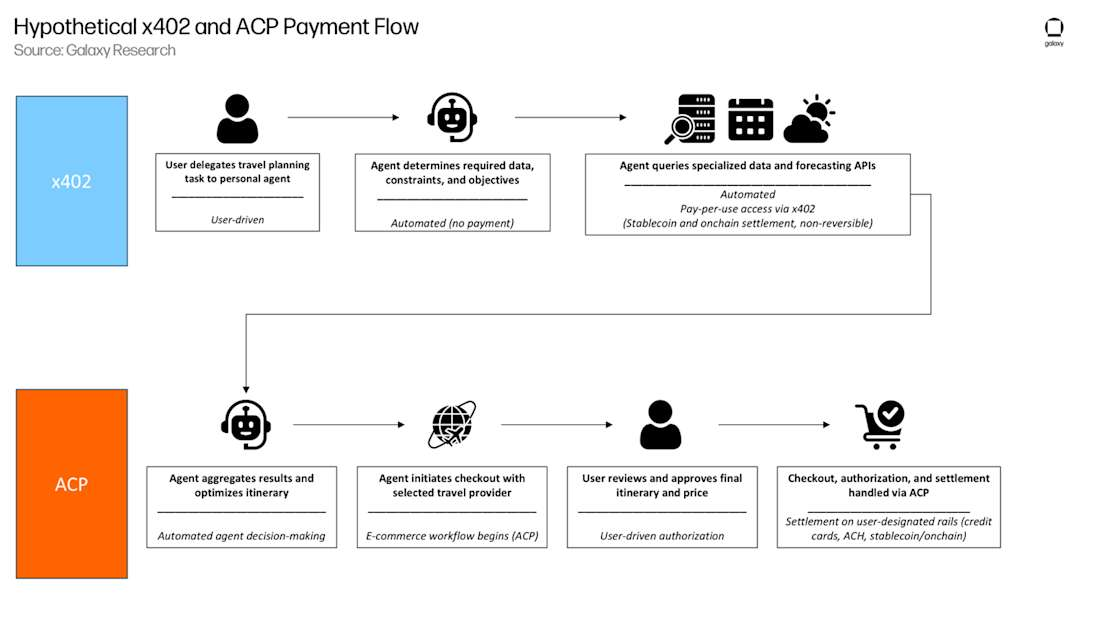

What Is The x402 Protocol?

The x402 protocol is an open standard designed to enable automated API payments. It extends the traditional HTTP 402 Payment Required status code by adding machine-readable payment instructions.

The key innovation of the x402 protocol is that it transforms payment into a standardized interface that machines can understand and execute. When an AI system needs to access a paid API or complete a transaction, it does not need to follow traditional human payment procedures such as account registration, credit card verification, or manual approval. Instead, payment instructions can be embedded directly within the HTTP request and settled instantly using digital assets such as stablecoins.

Image source: Galaxy Research

By integrating support for the x402 protocol, GateRouter allows applications and AI Agents to automatically detect service pricing and complete real-time payments. This approach improves transaction security while enabling a flexible pay-as-you-go model. It also removes the complexity associated with traditional prepaid accounts and manual balance management.
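To make "machine-readable payment instructions" concrete, the sketch below parses a 402 response body and picks a payment option the agent can afford. The JSON field names (`accepts`, `asset`, `network`, `amount`, `pay_to`) and the sample values are illustrative assumptions, not the normative x402 schema; consult the protocol specification for the actual format.

```python
import json
from decimal import Decimal

# Example 402 response body. Field names and values are illustrative
# assumptions, not the normative x402 schema.
RAW_402_BODY = """
{
  "accepts": [
    {"asset": "USDT", "network": "example-chain-a", "amount": "0.030", "pay_to": "0xMERCHANT"},
    {"asset": "USDC", "network": "example-chain-b", "amount": "0.025", "pay_to": "0xMERCHANT"}
  ]
}
"""


def choose_payment_option(raw_body: str, held_assets: set, max_amount: Decimal):
    """Return the cheapest option payable with held assets, or None."""
    options = json.loads(raw_body)["accepts"]
    affordable = [
        o for o in options
        if o["asset"] in held_assets and Decimal(o["amount"]) <= max_amount
    ]
    if not affordable:
        return None
    return min(affordable, key=lambda o: Decimal(o["amount"]))


option = choose_payment_option(RAW_402_BODY, {"USDC", "USDT"}, Decimal("0.05"))
print(option["asset"], option["amount"])
```

Amounts are parsed with `Decimal` rather than `float` so that price comparisons are exact.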

Which AI Models Are Supported By GateRouter?

As part of the Gate for AI ecosystem, GateRouter aims to build a neutral and comprehensive model access environment. Developers can use GateRouter’s unified endpoint to access a wide range of AI models provided by leading global vendors. These include, but are not limited to:

- GPT series: Commonly used for general natural language processing tasks such as text generation, summarization, and conversational AI.
- Claude series: Known for strong long-context understanding and safety-oriented model design.
- Gemini: Designed with advanced multimodal capabilities, allowing the processing of text, images, and other input formats.
- Other open-source models: Models such as DeepSeek and Llama provide developers with cost-efficient alternatives for certain workloads.

Through GateRouter, developers do not need to integrate each model provider separately. Instead, they can access multiple models through a single API endpoint. This unified approach allows easier integration, more flexible model switching, and reduced development and maintenance costs.

Is GateRouter Compatible With The OpenAI API?

GateRouter provides API endpoints that are compatible with the OpenAI API format. This means developers can connect their existing applications to GateRouter with minimal code changes while gaining access to multiple AI models through the same interface.

The compatibility advantages include:

- Existing AI application code does not need to be rewritten
- Current API workflows can remain unchanged
- Model providers can be switched automatically in the background

For developers who are already using the OpenAI API, this compatibility significantly reduces the cost and complexity of migration while expanding access to a broader range of AI models.
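As a rough illustration of what OpenAI API compatibility means in practice, the sketch below builds a request in the OpenAI chat completions format (a `model` name plus a `messages` list, sent to a `/chat/completions` path) and points it at a different base URL. The base URL, API key, and model name are placeholders, and the assumption that a compatible gateway exposes the same path is inferred from the compatibility claim rather than from GateRouter documentation. The request is only constructed, not sent.

```python
import json
import urllib.request

# Placeholder values: take the real endpoint and key from the provider's docs.
BASE_URL = "https://gateway.example.invalid/v1"
API_KEY = "sk-placeholder"


def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("example-model", "Summarize this paragraph.")
print(req.get_method(), req.full_url)
```

Under this assumption, migrating an existing application comes down to changing the base URL and credentials while the payload shape stays the same.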

GateRouter vs OpenRouter

Both GateRouter and OpenRouter are AI model routing platforms that allow developers to access multiple large language models through a unified API. However, the two platforms differ significantly in areas such as payment mechanisms, AI agent support, and Web3 integration.

| Comparison Dimension | GateRouter | OpenRouter |
|---|---|---|
| Core Positioning | AI model router with crypto payment gateway | AI model routing platform |
| API Access | Single API for multiple models | Single API for multiple models |
| Supported Models | GPT, Claude, Gemini, DeepSeek, Llama | GPT, Claude, Gemini, Mistral and others |
| Payment Method | Cryptocurrency-based automated payments using x402 | Traditional account-based billing |
| AI Agent Payments | Supports machine-to-machine automated payments | Does not support native automated payments |
| Web3 Integration | Natively integrated with Web3 infrastructure | Limited Web3 integration |
| OpenAI API Compatibility | Supported | Supported |

Overall, GateRouter focuses more on integrating AI infrastructure with Web3 automated payment systems, while OpenRouter primarily acts as a platform for aggregating access to multiple AI models.

Potential Use Cases For GateRouter

GateRouter can help developers manage multiple large language models while supporting intelligent routing, automated payments, and multi-model collaboration. These capabilities make it suitable for several types of AI applications.

AI Agents

AI agents often need to continuously interact with multiple AI models in order to complete complex tasks such as information analysis, content generation, or automated decision making. GateRouter provides a unified access layer that allows these agents to switch between models depending on the task.

In such scenarios, GateRouter can enable:

- Automatic selection of the most suitable AI model for each task
- Dynamic routing of requests based on cost and performance considerations
- Automated payment of API usage through the x402 protocol

DeFi And AI Applications

Within the Web3 ecosystem, AI is increasingly used for DeFi data analysis, market monitoring, and trading strategy development. Potential DeFi and AI applications include automated DeFi analytics agents, market sentiment monitoring systems, and AI-driven strategy generation tools.

GateRouter can support these applications by providing access to multiple AI models for analysis, automated data processing capabilities, and API calls that can be paid for using cryptocurrency. Because the platform supports stablecoin-based payments, it is particularly suitable for Web3-native AI applications.

Autonomous Services

Another emerging trend in the digital economy is the development of autonomous services that operate continuously without direct human intervention. These systems may automatically call AI models whenever certain conditions are met.

By combining model routing with automated payment mechanisms, GateRouter can support infrastructure for fully automated AI services. In such environments, the platform can enable:

- Automatic access to AI services
- A pay-as-you-go API usage model
- Machine-to-machine payment capabilities

Conclusion

GateRouter is a multi-model routing platform designed for AI applications and AI agent ecosystems. By providing a unified API that connects to multiple large language models and applying intelligent routing mechanisms, GateRouter helps developers optimize the performance and cost efficiency of their AI infrastructure.

At the same time, the platform integrates support for the x402 protocol and cryptocurrency-based payment mechanisms. This allows AI agents to automatically pay for API usage without human intervention, enabling more advanced automation scenarios.

As the concept of an AI agent economy continues to develop, infrastructure platforms such as GateRouter may play an important role in connecting AI models, developers, and automated payment systems within the evolving digital ecosystem.

FAQs

What Is The Main Purpose Of GateRouter?

GateRouter is an AI model routing platform that allows developers and AI agents to access multiple large language models through a single API. The system automatically selects the most suitable model for each request based on task requirements, helping optimize performance and cost efficiency.

How Is GateRouter Different From A Traditional AI API Gateway?

Traditional AI API gateways mainly focus on request management and access control. GateRouter extends this concept by adding intelligent model routing and automated payment capabilities. This allows requests to be dynamically distributed across multiple AI models while supporting automated payment for API usage.

Is GateRouter Only Designed For AI Agents?

No. Although GateRouter supports automated API calls by AI agents, it is also suitable for general AI applications developed by individuals or teams. These applications may include chatbots, data analysis tools, content generation systems, or other AI-powered services.

Which AI Models Are Supported By GateRouter?

GateRouter supports large language models from multiple providers, including GPT, Claude, Gemini, DeepSeek, and Llama. Developers can access these models through a unified API endpoint without integrating each provider separately.

Why Does GateRouter Support Cryptocurrency Payments?

GateRouter supports cryptocurrency payments through the x402 protocol, allowing AI agents to automatically pay for API requests. This mechanism is particularly suitable for machine-to-machine payment scenarios and enables more flexible payment models for Web3-based AI applications.

Can Individual Developers Use GateRouter?

Yes. GateRouter is compatible with the OpenAI API, making it suitable for individual developers or startup teams that want to quickly integrate multi-model AI capabilities into their applications.
